# Create a new annotation

Source: https://docs.avidoai.com/api-reference/annotations/create-a-new-annotation

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/annotations

Creates a new annotation.

# Delete an annotation

Source: https://docs.avidoai.com/api-reference/annotations/delete-an-annotation

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/annotations/{id}

Deletes an existing annotation.

# Get a single annotation by ID

Source: https://docs.avidoai.com/api-reference/annotations/get-a-single-annotation-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/annotations/{id}

Retrieves detailed information about a specific annotation.

# List annotations

Source: https://docs.avidoai.com/api-reference/annotations/list-annotations

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/annotations

Retrieves a paginated list of annotations with optional filtering.

# Update an annotation

Source: https://docs.avidoai.com/api-reference/annotations/update-an-annotation

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/annotations/{id}

Updates an existing annotation.

# Get app config

Source: https://docs.avidoai.com/api-reference/app-config/get-app-config

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/app-config

Returns public feature flags the dashboard needs to render correctly (e.g., whether the web scraper is enabled).

# Create a new application

Source: https://docs.avidoai.com/api-reference/applications/create-a-new-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/applications

Creates a new application configuration.

# Create an API key for an application

Source: https://docs.avidoai.com/api-reference/applications/create-an-api-key-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/applications/{id}/api-keys

Creates a new API key for the specified application. The plaintext key is returned only once and must be stored securely.

# Delete an API key

Source: https://docs.avidoai.com/api-reference/applications/delete-an-api-key

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/applications/{id}/api-keys/{keyId}

Deletes an API key for the specified application. The operation is idempotent: it returns 204 whether or not the key existed.
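Because the endpoint returns 204 whether or not the key existed, a client can retry a delete after a dropped connection without first checking whether the earlier attempt went through. The following is a minimal sketch of that pattern, not part of the Avido SDK. The `send` callable, the helper names, and the retry count are all hypothetical; `send` stands in for whatever HTTP client you use and is assumed to return the response status code.

```python
from typing import Callable
from urllib.parse import quote


def api_key_path(application_id: str, key_id: str) -> str:
    """Build the path for DELETE /v0/applications/{id}/api-keys/{keyId}."""
    return (
        f"/v0/applications/{quote(application_id, safe='')}"
        f"/api-keys/{quote(key_id, safe='')}"
    )


def delete_with_retry(
    send: Callable[[str, str], int], path: str, attempts: int = 3
) -> bool:
    """Retry a DELETE on connection errors (hypothetical helper).

    `send(method, path)` is an assumed transport hook that returns the HTTP
    status code or raises ConnectionError. Retrying is safe only because the
    endpoint is documented as idempotent.
    """
    for _ in range(attempts):
        try:
            status = send("DELETE", path)
        except ConnectionError:
            continue  # The request may or may not have landed; try again.
        return status == 204
    return False
```

Any status other than 204 is treated as a failure here and is not retried; a real client would likely distinguish authentication errors from server errors.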

# Get a single application by ID

Source: https://docs.avidoai.com/api-reference/applications/get-a-single-application-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/applications/{id}

Retrieves detailed information about a specific application.

# Get a single application by slug

Source: https://docs.avidoai.com/api-reference/applications/get-a-single-application-by-slug

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/applications/by-slug/{slug}

Retrieves detailed information about a specific application by its slug.

# List API keys for an application

Source: https://docs.avidoai.com/api-reference/applications/list-api-keys-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/applications/{id}/api-keys

Retrieves all API keys associated with a specific application.

# List applications

Source: https://docs.avidoai.com/api-reference/applications/list-applications

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/applications

Retrieves a paginated list of applications with optional filtering.

# Bulk create assignments

Source: https://docs.avidoai.com/api-reference/assignments/bulk-create-assignments

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/quickstarts/{id}/assignments/bulk

Creates multiple assignments in a single request.

# Bulk update assignments

Source: https://docs.avidoai.com/api-reference/assignments/bulk-update-assignments

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/quickstarts/{id}/assignments/bulk

Updates multiple assignments in a single request.

# Create a new assignment

Source: https://docs.avidoai.com/api-reference/assignments/create-a-new-assignment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/quickstarts/{id}/assignments

Creates a new assignment linking a user to a topic.

# Delete an assignment

Source: https://docs.avidoai.com/api-reference/assignments/delete-an-assignment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/quickstarts/{id}/assignments/{assignmentId}

Deletes an assignment.

# Get a single assignment by ID

Source: https://docs.avidoai.com/api-reference/assignments/get-a-single-assignment-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/assignments/{assignmentId}

Retrieves detailed information about a specific assignment.

# List assignments for a quickstart

Source: https://docs.avidoai.com/api-reference/assignments/list-assignments-for-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/assignments

Retrieves a paginated list of assignments for a quickstart with optional filtering.

# Update an assignment

Source: https://docs.avidoai.com/api-reference/assignments/update-an-assignment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/quickstarts/{id}/assignments/{assignmentId}

Updates an existing assignment.

# Stream assistant response

Source: https://docs.avidoai.com/api-reference/assistant/stream-assistant-response

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/assistant/chat

Streams assistant output and tool execution events as an SSE UI message stream.
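Since the response is a Server-Sent Events stream, a client has to split it into events before interpreting them. The sketch below handles only the generic SSE framing (`data:` lines grouped by blank lines, comment lines starting with `:`); it makes no assumption about what each UI message payload contains. The function name `iter_sse_data` is illustrative.

```python
from typing import Iterable, Iterator


def iter_sse_data(lines: Iterable[str]) -> Iterator[str]:
    """Yield the data payload of each SSE event from an iterable of lines.

    Only the `data` field is collected; other fields (event, id, retry)
    are ignored in this sketch.
    """
    buffer = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line terminates the current event.
            if buffer:
                yield "\n".join(buffer)
                buffer = []
            continue
        if line.startswith(":"):
            continue  # Comment line, often used as a keep-alive.
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # A single leading space is not part of the value.
        if field == "data":
            buffer.append(value)
    # An event that is not terminated by a blank line is discarded.
```

Each yielded string would then be decoded according to the message format the endpoint actually emits, which this reference does not specify.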

# Get current session

Source: https://docs.avidoai.com/api-reference/authentication/get-current-session

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/auth/session

Returns the currently authenticated session for cookie-based callers. The apps/app frontend uses it to centralize session validation through the API rather than calling Better Auth directly. It is authenticated by the Better Auth session cookie on the parent domain, not by API key / application id like the rest of the v0 API.

# List enabled auth providers

Source: https://docs.avidoai.com/api-reference/authentication/list-enabled-auth-providers

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/auth/providers

Returns which auth providers are currently configured on the API server: the set of SSO social providers (derived from `GOOGLE_CLIENT_ID/SECRET`, `MICROSOFT_CLIENT_ID/SECRET`) and whether an email provider (Loops) is configured via `LOOPS_API_KEY`. This endpoint is unauthenticated so that the login UI can render the correct set of provider buttons before the user signs in.
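To make the derivation concrete, here is one plausible reading of it as code. This is an illustration only, not the server's implementation: it assumes a social provider counts as enabled when both its client ID and client secret are set, and the returned dictionary shape (`social`, `email`) is hypothetical rather than the documented response schema.

```python
from typing import Mapping

# Environment variable pairs named in the description above.
SOCIAL_PROVIDER_ENV = {
    "google": ("GOOGLE_CLIENT_ID", "GOOGLE_CLIENT_SECRET"),
    "microsoft": ("MICROSOFT_CLIENT_ID", "MICROSOFT_CLIENT_SECRET"),
}


def enabled_providers(env: Mapping[str, str]) -> dict:
    """Derive enabled providers from environment values (assumed logic)."""
    social = [
        name
        for name, keys in SOCIAL_PROVIDER_ENV.items()
        if all(env.get(key) for key in keys)  # Assumption: both values required.
    ]
    return {"social": social, "email": bool(env.get("LOOPS_API_KEY"))}
```

In practice you would pass `os.environ` as `env`; taking a mapping keeps the function easy to test.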

# List organizations for current session

Source: https://docs.avidoai.com/api-reference/authentication/list-organizations-for-current-session

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/auth/organizations

Returns the organizations the authenticated user belongs to. Called in-process via `auth.api.listOrganizations` so server-to-server requests are not subject to Better Auth's `Origin`-header CSRF check. This endpoint is cookie-authenticated and is not part of the public API key / application-id contract.

# Set active organization for current session

Source: https://docs.avidoai.com/api-reference/authentication/set-active-organization-for-current-session

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/auth/organizations/set-active

Switches the active organisation on the authenticated session. Called in-process via `auth.api.setActiveOrganization` so server-to-server requests are not subject to Better Auth's `Origin`-header CSRF check. Better Auth rotates the active-org cookie; the rotated `Set-Cookie` headers are forwarded on the response so the browser's cookie jar is updated atomically with the 201 reply.

# Delete a contradiction

Source: https://docs.avidoai.com/api-reference/contradictions/delete-a-contradiction

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/quickstarts/{id}/contradictions/{contradictionId}

Deletes a contradiction.

# List contradictions for a quickstart

Source: https://docs.avidoai.com/api-reference/contradictions/list-contradictions-for-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/contradictions

Retrieves a paginated list of contradictions for the given quickstart with optional filtering.

# Update a contradiction

Source: https://docs.avidoai.com/api-reference/contradictions/update-a-contradiction

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/quickstarts/{id}/contradictions/{contradictionId}

Updates an existing contradiction, for example to assign a user or mark it as resolved.

# Get a conversation

Source: https://docs.avidoai.com/api-reference/conversations/get-a-conversation

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/conversations/{id}

Retrieves a single conversation by ID, including its messages.

# List conversations

Source: https://docs.avidoai.com/api-reference/conversations/list-conversations

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/conversations

Retrieves a paginated list of conversations with optional filtering. When includeMessages=true is passed with a taskId, each conversation includes its messages.
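A small sketch of building the request URL with these two parameters. The `includeMessages` and `taskId` names come from the description above; the base URL is a placeholder, and raising an error when `includeMessages` is requested without a `taskId` is a client-side choice made for this example, not documented server behavior.

```python
from typing import Optional
from urllib.parse import urlencode


def conversations_url(
    base_url: str, task_id: Optional[str] = None, include_messages: bool = False
) -> str:
    """Build a GET /v0/conversations URL (base_url is a placeholder)."""
    params = {}
    if task_id is not None:
        params["taskId"] = task_id
    if include_messages:
        if task_id is None:
            # Assumption: messages are only included when a taskId is given.
            raise ValueError("include_messages requires task_id")
        params["includeMessages"] = "true"
    query = urlencode(params)
    return f"{base_url}/v0/conversations" + (f"?{query}" if query else "")
```

For example, `conversations_url("https://api.example.com", "task_123", True)` produces a URL ending in `/v0/conversations?taskId=task_123&includeMessages=true`.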

# Upload conversations via CSV file

Source: https://docs.avidoai.com/api-reference/conversations/upload-conversations-via-csv-file

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/conversations/upload

Uploads a CSV file containing conversations. The file will be validated and, unless processFile is false, processed asynchronously. Conversations are application-scoped and require an application context.

# List document chunks

Source: https://docs.avidoai.com/api-reference/document-chunks/list-document-chunks

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/chunked

Retrieves a paginated list of document chunks with optional filtering by document ID.

# Get tags for a document

Source: https://docs.avidoai.com/api-reference/document-tags/get-tags-for-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/{id}/tags

Retrieves all tags assigned to a specific document.

# Update document tags

Source: https://docs.avidoai.com/api-reference/document-tags/update-document-tags

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/{id}/tags

Updates the tags assigned to a specific document. This replaces all existing tags.

# Delete unmapped document

Source: https://docs.avidoai.com/api-reference/document-tests/delete-unmapped-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/documents/tests/{testId}/coverage/unmapped-documents/{documentId}

Deletes a document from the application and removes it from the unmapped documents list in a knowledge coverage test result.

# List document tests

Source: https://docs.avidoai.com/api-reference/document-tests/list-document-tests

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/tests

Retrieves a paginated list of document tests with optional filtering by type and status.

# Remove unmapped document from results

Source: https://docs.avidoai.com/api-reference/document-tests/remove-unmapped-document-from-results

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/tests/{testId}/coverage/unmapped-documents/{documentId}

Removes a document from the unmapped documents list in a knowledge coverage test result without deleting the document itself.

# Trigger document test

Source: https://docs.avidoai.com/api-reference/document-tests/trigger-document-test

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/tests/trigger

Creates and triggers a document test execution. For KNOWLEDGE_COVERAGE and DOCS_TO_TASKS_MAPPING, applicationId is required.

# Activate a specific version of a document

Source: https://docs.avidoai.com/api-reference/document-versions/activate-a-specific-version-of-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/{id}/versions/{versionNumber}/activate

Makes a specific version the active version of a document. This is the version that will be returned by default when fetching the document.

# Create a new version of a document

Source: https://docs.avidoai.com/api-reference/document-versions/create-a-new-version-of-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/{id}/versions

Creates a new version of an existing document. The new version will have the next version number.

# Get a specific version of a document

Source: https://docs.avidoai.com/api-reference/document-versions/get-a-specific-version-of-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/{id}/versions/{versionNumber}

Retrieves a specific version of a document by version number.

# List all versions of a document

Source: https://docs.avidoai.com/api-reference/document-versions/list-all-versions-of-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/{id}/versions

Retrieves all versions of a specific document, ordered by version number descending.
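With versions ordered by version number descending, the first element is the most recent one. The sketch below reproduces that ordering client-side and picks the latest version. The `versionNumber` field name is an assumption borrowed from the `{versionNumber}` path parameter; the actual response schema may differ.

```python
from typing import Optional


def sort_versions_desc(versions: list) -> list:
    """Return versions ordered by version number, highest first."""
    return sorted(versions, key=lambda v: v["versionNumber"], reverse=True)


def latest_version(versions: list) -> Optional[dict]:
    """Return the version with the highest number, or None if empty."""
    ordered = sort_versions_desc(versions)
    return ordered[0] if ordered else None
```

If the list already comes back sorted, `latest_version` reduces to taking the first item; sorting again simply avoids relying on that.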

# Add tags to multiple documents

Source: https://docs.avidoai.com/api-reference/documents/add-tags-to-multiple-documents

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/tags

Add one or more tags to multiple documents in a single request. All documents and tags must exist and belong to the same organization.

# Assign a document to a user

Source: https://docs.avidoai.com/api-reference/documents/assign-a-document-to-a-user

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/{id}/assign

Assigns a specific document to a user by their user ID.

# Bulk activate latest versions of multiple documents

Source: https://docs.avidoai.com/api-reference/documents/bulk-activate-latest-versions-of-multiple-documents

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/activate-latest

Activates the latest version (highest version number) for multiple documents. Returns information about which documents were successfully activated and which failed.

# Bulk export documents as CSV

Source: https://docs.avidoai.com/api-reference/documents/bulk-export-documents-as-csv

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/export/csv

Exports the selected documents as a CSV file with title and content columns taken from each document's active version.

# Bulk optimize multiple documents

Source: https://docs.avidoai.com/api-reference/documents/bulk-optimize-multiple-documents

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/optimize

Triggers background optimization jobs for multiple documents. Returns information about which documents were successfully queued and which failed.

# Bulk update status of multiple document versions

Source: https://docs.avidoai.com/api-reference/documents/bulk-update-status-of-multiple-document-versions

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/status

Updates the status of the active version for multiple documents.

# Create a new document

Source: https://docs.avidoai.com/api-reference/documents/create-a-new-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents

Creates a new document with the provided information.

# Delete a document

Source: https://docs.avidoai.com/api-reference/documents/delete-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/documents/{id}

Deletes a document and its related document versions by the given document ID.

# Delete multiple documents

Source: https://docs.avidoai.com/api-reference/documents/delete-multiple-documents

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/delete

Deletes multiple documents by ID. This will also delete their versions.

# Get a single document by ID

Source: https://docs.avidoai.com/api-reference/documents/get-a-single-document-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/{id}

Retrieves detailed information about a specific document, including its parent-child relationships and active version details.

# Get all document IDs

Source: https://docs.avidoai.com/api-reference/documents/get-all-document-ids

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/ids

Fetches all document IDs without pagination. Supports filtering by status and tags. Useful for bulk operations.
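When the full ID list feeds a bulk endpoint, it is often convenient to send it in batches. The helper below is a generic sketch; this reference does not state any per-request limit, so the batch size is an arbitrary illustrative value that you would choose yourself.

```python
from typing import Iterator, List


def chunked(ids: List[str], size: int) -> Iterator[List[str]]:
    """Split a list of IDs into consecutive batches of at most `size`."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(ids), size):
        yield ids[start:start + size]
```

Each batch could then be passed as the ID list of one bulk request, such as a bulk optimize or bulk delete call.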

# Get document counts

Source: https://docs.avidoai.com/api-reference/documents/get-document-counts

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/count

Returns total document count and count of documents that have an active version.

# Get document counts by assignee

Source: https://docs.avidoai.com/api-reference/documents/get-document-counts-by-assignee

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents/count-by-assignee

Retrieves document counts grouped by assignee for the authenticated organization.

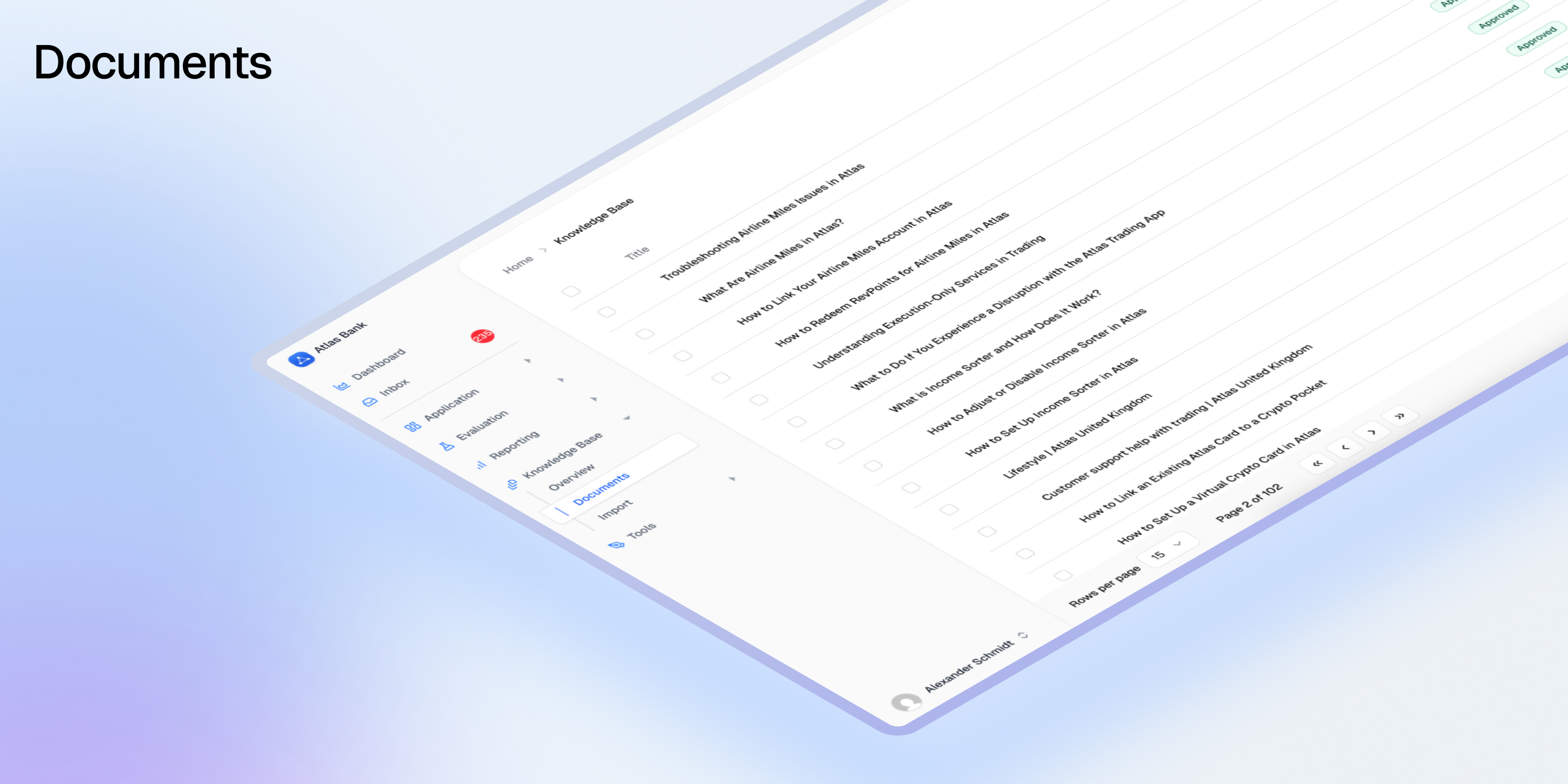

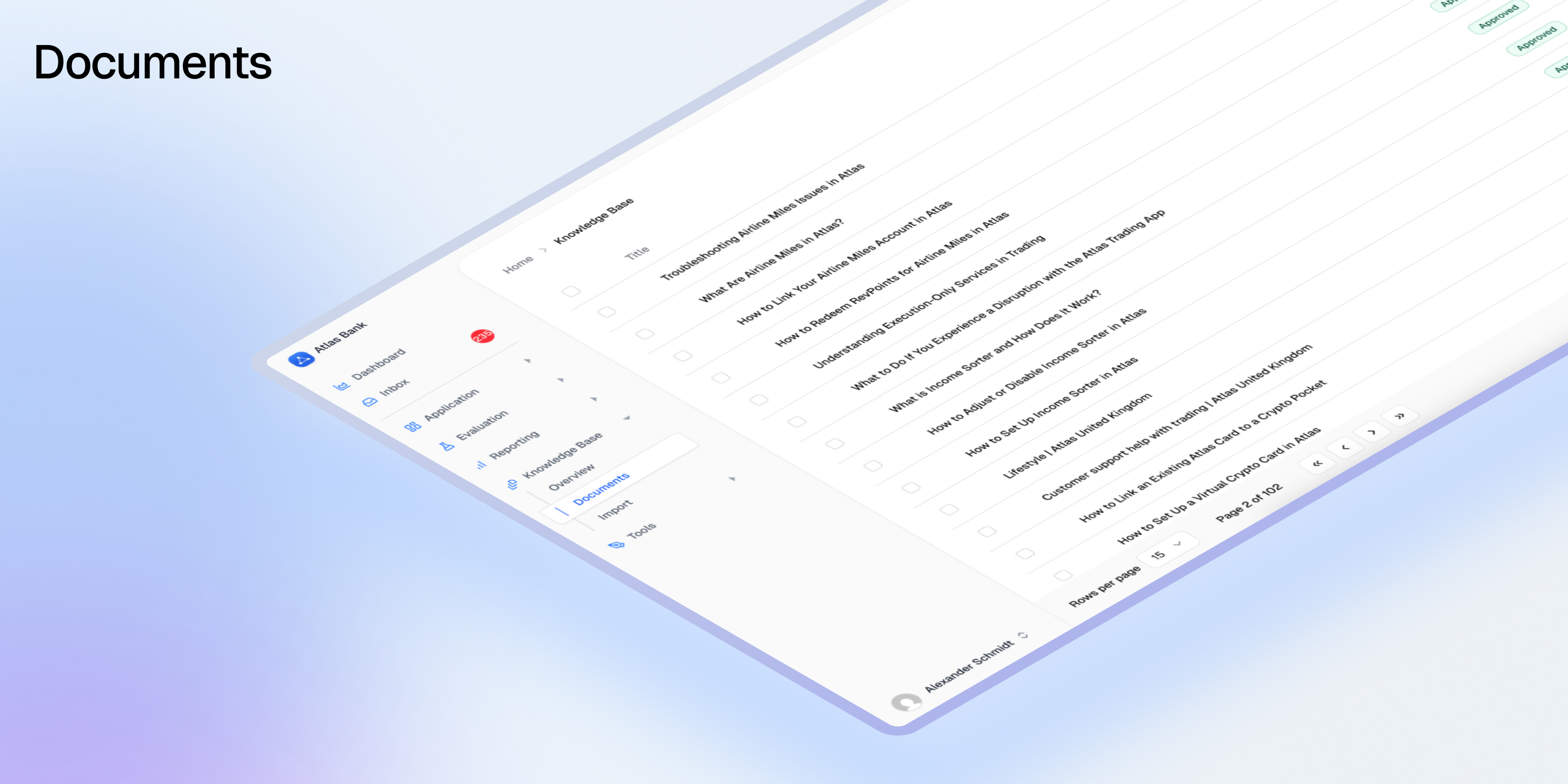

# List documents

Source: https://docs.avidoai.com/api-reference/documents/list-documents

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/documents

Retrieves a paginated list of documents with optional filtering by status, assignee, parent, and other criteria. Only returns documents with active approved versions unless otherwise specified.

# Optimize a document

Source: https://docs.avidoai.com/api-reference/documents/optimize-a-document

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/{id}/optimize

Triggers a background optimization job for the specified document. Returns 204 No Content on success.

# Update a document version

Source: https://docs.avidoai.com/api-reference/documents/update-a-document-version

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/{id}/versions/{versionNumber}

Updates the content, title, status, or other fields of a specific document version.

# Update a document's topic

Source: https://docs.avidoai.com/api-reference/documents/update-a-documents-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/documents/{id}

Updates the topic assignment for a specific document. Set topicId to null to remove the topic.
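Removing a topic means sending an explicit JSON `null`, which is different from leaving the field out. A minimal sketch of serializing the request body follows; only the `topicId` field is taken from the description above, and the helper name is illustrative.

```python
import json
from typing import Optional


def topic_update_body(topic_id: Optional[str]) -> str:
    """Serialize the body for PUT /v0/documents/{id}.

    Passing None produces an explicit JSON null, which removes the topic.
    """
    return json.dumps({"topicId": topic_id})
```

Here `topic_update_body(None)` yields `{"topicId": null}`, while `topic_update_body("topic_123")` assigns that topic (the ID is a made-up value).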

# Upload documents via CSV or PDF file

Source: https://docs.avidoai.com/api-reference/documents/upload-documents-via-csv-or-pdf-file

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/documents/upload

Uploads a CSV or PDF file containing documents. CSV files will be validated and processed. PDF files will be processed via OCR. Unless processFile is set to false, processing happens asynchronously.

# Create an evaluation definition

Source: https://docs.avidoai.com/api-reference/eval-definitions/create-an-evaluation-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions

Creates a new evaluation definition for an application.

# Delete an evaluation definition

Source: https://docs.avidoai.com/api-reference/eval-definitions/delete-an-evaluation-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/definitions/{id}

Deletes an evaluation definition and all associated data (cascade delete of linked tasks and evaluations).

# Get application-level evaluation definitions

Source: https://docs.avidoai.com/api-reference/eval-definitions/get-application-level-evaluation-definitions

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/definitions/application

Retrieves all evaluation definitions linked at the application level.

# Link an evaluation definition to a task

Source: https://docs.avidoai.com/api-reference/eval-definitions/link-an-evaluation-definition-to-a-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/link

Associates an evaluation definition with a task for automatic evaluation.

# Link evaluation definitions to the application

Source: https://docs.avidoai.com/api-reference/eval-definitions/link-evaluation-definitions-to-the-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/link-application

Associates evaluation definitions with the application for automatic evaluation of all tasks.

# Link evaluation definitions to topics

Source: https://docs.avidoai.com/api-reference/eval-definitions/link-evaluation-definitions-to-topics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/link-topics

Associates evaluation definitions with topics for automatic evaluation of all tasks in those topics.

# List evaluation definitions

Source: https://docs.avidoai.com/api-reference/eval-definitions/list-evaluation-definitions

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/definitions

Retrieves a paginated list of evaluation definitions for an application.

# Unlink an evaluation definition from a task

Source: https://docs.avidoai.com/api-reference/eval-definitions/unlink-an-evaluation-definition-from-a-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/unlink

Removes the association between an evaluation definition and a task.

# Unlink evaluation definitions from the application

Source: https://docs.avidoai.com/api-reference/eval-definitions/unlink-evaluation-definitions-from-the-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/unlink-application

Removes the association between evaluation definitions and the application.

# Unlink evaluation definitions from topics

Source: https://docs.avidoai.com/api-reference/eval-definitions/unlink-evaluation-definitions-from-topics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/definitions/unlink-topics

Removes the association between evaluation definitions and topics.

# Update an evaluation definition

Source: https://docs.avidoai.com/api-reference/eval-definitions/update-an-evaluation-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/definitions/{id}

Updates an existing evaluation definition.

# Update task-specific config for an evaluation definition

Source: https://docs.avidoai.com/api-reference/eval-definitions/update-task-specific-config-for-an-evaluation-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/definitions/{id}/tasks/{taskId}

Updates the task-specific configuration (e.g., expected output) for an evaluation definition on a specific task.

# List tests

Source: https://docs.avidoai.com/api-reference/evals/list-tests

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tests

Retrieves a paginated list of tests with optional filtering.

# Create an experiment

Source: https://docs.avidoai.com/api-reference/experiments/create-an-experiment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/experiments

Creates a new experiment with the provided details.

# Create an experiment variant

Source: https://docs.avidoai.com/api-reference/experiments/create-an-experiment-variant

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/experiments/{id}/variants

Creates a new variant for the specified experiment.

# Get an experiment

Source: https://docs.avidoai.com/api-reference/experiments/get-an-experiment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/experiments/{id}

Retrieves a single experiment by ID.

# List experiment variants

Source: https://docs.avidoai.com/api-reference/experiments/list-experiment-variants

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/experiments/{id}/variants

Retrieves a paginated list of variants for the specified experiment.

# List experiments

Source: https://docs.avidoai.com/api-reference/experiments/list-experiments

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/experiments

Retrieves a paginated list of experiments with optional filtering.

# Trigger experiment variant

Source: https://docs.avidoai.com/api-reference/experiments/trigger-experiment-variant

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/experiments/{id}/variants/{variantId}/trigger

Triggers execution of all tasks associated with the experiment for the specified variant. Returns 204 No Content on success.

# Update an experiment

Source: https://docs.avidoai.com/api-reference/experiments/update-an-experiment

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/experiments/{id}

Updates an existing experiment with the provided details.

# Update experiment variant

Source: https://docs.avidoai.com/api-reference/experiments/update-experiment-variant

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/experiments/{id}/variants/{variantId}

Updates a specific experiment variant. Only title, description, and configPatch can be updated.

# Bulk create facts

Source: https://docs.avidoai.com/api-reference/facts/bulk-create-facts

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/facts/bulk

Creates multiple facts in a single request.

# Create a new fact

Source: https://docs.avidoai.com/api-reference/facts/create-a-new-fact

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/facts

Creates a new fact, optionally linked to topics.

# Delete a fact

Source: https://docs.avidoai.com/api-reference/facts/delete-a-fact

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/facts/{id}

Deletes a fact and its associated topic links.

# Get a single fact by ID

Source: https://docs.avidoai.com/api-reference/facts/get-a-single-fact-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/facts/{id}

Retrieves detailed information about a specific fact.

# List facts

Source: https://docs.avidoai.com/api-reference/facts/list-facts

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/facts

Retrieves a paginated list of facts with optional filtering.

# Update a fact

Source: https://docs.avidoai.com/api-reference/facts/update-a-fact

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/facts/{id}

Updates the statement, scope, or status of an existing fact.

# Send product feedback

Source: https://docs.avidoai.com/api-reference/feedback/send-product-feedback

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/feedback

Dispatches a feedback email to the Avido team via the Loops transactional email integration. The authenticated user's email is attached automatically.

# Delete a file processing

Source: https://docs.avidoai.com/api-reference/file-processings/delete-a-file-processing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/file-processings/{id}

Deletes a file processing record by the given ID.

# List file processings

Source: https://docs.avidoai.com/api-reference/fileprocessings/list-file-processings

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/file-processings

Retrieves a paginated list of file processings with optional filtering.

# Create an inference step

Source: https://docs.avidoai.com/api-reference/inference-steps/create-an-inference-step

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/inference-steps

# List inference steps

Source: https://docs.avidoai.com/api-reference/inference-steps/list-inference-steps

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/inference-steps

# Ingest events

Source: https://docs.avidoai.com/api-reference/ingestion/ingest-events

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/ingest

Ingest an array of events (traces or steps) to store and process.

# Ingest OTLP traces

Source: https://docs.avidoai.com/api-reference/ingestion/ingest-otlp-traces

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/otel/traces

Ingest OpenTelemetry Protocol (OTLP) traces in JSON format. Converts OTLP spans to Avido events and processes them through the standard ingestion pipeline. Supports OpenInference semantic conventions for LLM, tool, retriever, and other span types.
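For orientation, this is roughly what a single-span OTLP/JSON payload looks like. The structure follows the general OTLP JSON encoding (hex-encoded IDs, nanosecond timestamps as strings) and uses the OpenInference `openinference.span.kind` attribute. The service name, scope name, and span name are placeholders, and which attributes Avido actually requires is not stated in this reference.

```python
import secrets
import time


def build_otlp_payload(service_name: str, span_name: str, duration_ms: int) -> dict:
    """Build a minimal OTLP/JSON trace payload containing one LLM span."""
    end_ns = time.time_ns()
    start_ns = end_ns - duration_ms * 1_000_000
    span = {
        "traceId": secrets.token_hex(16),  # 16 bytes, hex-encoded
        "spanId": secrets.token_hex(8),    # 8 bytes, hex-encoded
        "name": span_name,
        "kind": 1,  # SPAN_KIND_INTERNAL
        "startTimeUnixNano": str(start_ns),
        "endTimeUnixNano": str(end_ns),
        "attributes": [
            # OpenInference convention marking this span as an LLM call.
            {"key": "openinference.span.kind", "value": {"stringValue": "LLM"}},
        ],
    }
    return {
        "resourceSpans": [
            {
                "resource": {
                    "attributes": [
                        {"key": "service.name", "value": {"stringValue": service_name}}
                    ]
                },
                "scopeSpans": [
                    # The scope name is a placeholder for your instrumentation library.
                    {"scope": {"name": "example-instrumentation"}, "spans": [span]}
                ],
            }
        ]
    }
```

The resulting dictionary would be serialized with `json.dumps` and sent as the body of the POST request. In most setups an OpenTelemetry SDK exporter produces this payload for you, so hand-building it is mainly useful for testing.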

# Bulk update issues

Source: https://docs.avidoai.com/api-reference/issues/bulk-update-issues

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml patch /v0/issues

Updates status, priority, or assignment for multiple issues. Returns updated issues with assigned user information. User must be a valid organization member and not banned when assigning.

# Create a new issue

Source: https://docs.avidoai.com/api-reference/issues/create-a-new-issue

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/issues

Creates a new issue for tracking problems or improvements.

# Delete an issue

Source: https://docs.avidoai.com/api-reference/issues/delete-an-issue

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/issues/{id}

Deletes an existing issue permanently.

# Get a single issue by ID with duplicates

Source: https://docs.avidoai.com/api-reference/issues/get-a-single-issue-by-id-with-duplicates

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/issues/{id}

Retrieves detailed information about a specific issue, including all duplicate/child issues in the duplicates array with minimal fields (id, title, createdAt).

# Get suggested task for an issue

Source: https://docs.avidoai.com/api-reference/issues/get-suggested-task-for-an-issue

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/issues/{id}/suggested-task

Retrieves the DRAFT task associated with an issue of type SUGGESTED_TASK. This endpoint only works for issues that have a taskId and are of type SUGGESTED_TASK with a task in DRAFT status.

# List issues

Source: https://docs.avidoai.com/api-reference/issues/list-issues

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/issues

Retrieves a paginated list of issues with optional filtering by date range, status, priority, assignee, and more.

# List minimal issues

Source: https://docs.avidoai.com/api-reference/issues/list-minimal-issues

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/issues/minimal

Retrieves a paginated list of issues with only essential fields (id, title, status, source, priority, createdAt, assignedTo). Optimized for list views and performance. Supports sorting by createdAt and priority.

# Update an issue

Source: https://docs.avidoai.com/api-reference/issues/update-an-issue

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/issues/{id}

Updates an existing issue. Can be used to reassign, change status, update priority, or modify any other issue fields.

# Update and activate suggested task

Source: https://docs.avidoai.com/api-reference/issues/update-and-activate-suggested-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/issues/{id}/suggested-task

Updates the DRAFT task associated with an issue and activates it (changes status from DRAFT to ACTIVE). This endpoint only works for issues of type SUGGESTED_TASK with a task in DRAFT status. After activation, the task becomes a regular active task.

# Create model pricing

Source: https://docs.avidoai.com/api-reference/model-pricing/create-model-pricing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/model-pricing

Creates a new model pricing entry.

# Delete model pricing

Source: https://docs.avidoai.com/api-reference/model-pricing/delete-model-pricing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/model-pricing/{id}

Deletes a model pricing entry.

# Get model pricing

Source: https://docs.avidoai.com/api-reference/model-pricing/get-model-pricing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/model-pricing/{id}

Retrieves a specific model pricing entry by ID.

# List model pricing

Source: https://docs.avidoai.com/api-reference/model-pricing/list-model-pricing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/model-pricing

Retrieves a paginated list of model pricing entries with optional filtering.

# Update model pricing

Source: https://docs.avidoai.com/api-reference/model-pricing/update-model-pricing

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/model-pricing/{id}

Updates an existing model pricing entry.

# Get organization config

Source: https://docs.avidoai.com/api-reference/organization-config/get-organization-config

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/org-config

Retrieves the organization configuration settings. Returns default values if no config has been saved yet.

# Update organization config

Source: https://docs.avidoai.com/api-reference/organization-config/update-organization-config

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/org-config

Updates the organization configuration settings. Creates a new config if one doesn't exist. Only updates fields that are provided in the request body.
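
The create-or-merge behavior described above can be pictured with a small sketch. This is not the server implementation: the field names `timezone` and `weeklyDigest` are hypothetical placeholders, and starting a new config from default values is an assumption based on the defaults mentioned for the GET endpoint.

```python
from typing import Optional

# Hypothetical defaults; the real config fields are not listed in this summary.
DEFAULT_CONFIG = {"timezone": "UTC", "weeklyDigest": False}


def apply_config_update(stored: Optional[dict], patch: dict) -> dict:
    """Merge `patch` into the stored config, starting from defaults if none exists."""
    base = dict(DEFAULT_CONFIG) if stored is None else dict(stored)
    base.update(patch)  # only keys present in the request body change
    return base


# First call: no config saved yet, so one is created from the (assumed) defaults.
created = apply_config_update(None, {"weeklyDigest": True})
# Second call: only `timezone` is provided, so `weeklyDigest` keeps its value.
updated = apply_config_update(created, {"timezone": "Europe/Copenhagen"})
print(created)
print(updated)
```

Fields left out of the request body keep their previous values, which is the property the endpoint description emphasizes.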

# List organization members

Source: https://docs.avidoai.com/api-reference/organization-members/list-organization-members

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/organization-members

Retrieves the list of users who belong to the authenticated organization. Banned members are filtered out of the response. The `email` field is only returned to callers with `owner` or `admin` role — lower-privileged members receive the same payload without `email` to prevent directory enumeration.
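
As a rough illustration of the filtering rules above, the sketch below drops banned members and removes `email` for callers without the `owner` or `admin` role. The member dictionaries and the `banned`, `id`, and `name` keys are hypothetical; only the `email` field and the two role names come from the description.

```python
PRIVILEGED_ROLES = {"owner", "admin"}  # roles that may see `email`


def visible_members(members: list[dict], caller_role: str) -> list[dict]:
    """Return the member list as a caller with `caller_role` would see it."""
    result = []
    for member in members:
        if member.get("banned"):  # hypothetical flag: banned members are omitted
            continue
        view = {key: value for key, value in member.items() if key != "banned"}
        if caller_role not in PRIVILEGED_ROLES:
            view.pop("email", None)  # same payload, minus the email field
        result.append(view)
    return result


# Hypothetical sample data.
members = [
    {"id": "u1", "name": "Ada", "email": "ada@example.com", "banned": False},
    {"id": "u2", "name": "Bob", "email": "bob@example.com", "banned": True},
]
print(visible_members(members, "admin"))
print(visible_members(members, "member"))
```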

# Create a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/create-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/quickstarts

Creates a new quickstart with the provided details.

# Get a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/get-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}

Retrieves a single quickstart by ID.

# Get KB material counts for a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/get-kb-material-counts-for-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/kb-material-counts

Returns the number of knowledge base documents and scrape jobs associated with a quickstart.

# Get policy eval counts for a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/get-policy-eval-counts-for-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/policy-counts

Returns the total topics count, application-wide eval count, and per-topic eval counts for a quickstart. Includes both DRAFT and ACTIVE evals. Only evals created by this quickstart are counted.

# List documents for a quickstart topic

Source: https://docs.avidoai.com/api-reference/quickstartsv2/list-documents-for-a-quickstart-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/topics/{topicId}/documents

Retrieves a paginated list of documents belonging to a specific topic within a quickstart.

# List quickstarts

Source: https://docs.avidoai.com/api-reference/quickstartsv2/list-quickstarts

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts

Retrieves a paginated list of quickstarts with optional filtering.

# List tasks for a quickstart topic

Source: https://docs.avidoai.com/api-reference/quickstartsv2/list-tasks-for-a-quickstart-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/topics/{topicId}/tasks

Retrieves a paginated list of tasks belonging to a specific topic within a quickstart.

# List topics for a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/list-topics-for-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/topics

Retrieves a paginated list of topics belonging to a specific quickstart.

# List topics for a quickstart with document statistics

Source: https://docs.avidoai.com/api-reference/quickstartsv2/list-topics-for-a-quickstart-with-document-statistics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/quickstarts/{id}/document-topics

Retrieves a paginated list of topics belonging to a specific quickstart, enriched with document count and coverage statistics.

# Update a quickstart

Source: https://docs.avidoai.com/api-reference/quickstartsv2/update-a-quickstart

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/quickstarts/{id}

Updates an existing quickstart with the provided details.

# Create a new report

Source: https://docs.avidoai.com/api-reference/reports/create-a-new-report

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/reporting

Creates a new report.

# Delete a report

Source: https://docs.avidoai.com/api-reference/reports/delete-a-report

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/reporting/{id}

Deletes a specific report by its ID.

# Get a single report

Source: https://docs.avidoai.com/api-reference/reports/get-a-single-report

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/{id}

Retrieves a specific report by its ID.

# Get columns for a datasource

Source: https://docs.avidoai.com/api-reference/reports/get-columns-for-a-datasource

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/datasources/{id}/columns

Returns metadata about the whitelisted columns for a specific datasource that can be used for filtering in reporting queries.

# Get context-aware columns for a datasource

Source: https://docs.avidoai.com/api-reference/reports/get-context-aware-columns-for-a-datasource

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/reporting/datasources/{id}/columns

Returns available columns for a datasource based on the intent and current query context. For filters/groupBy/measurements intents, returns all eligible columns. For orderBy intent, returns columns based on current groupBy and measurements context.

# Get distinct values for a column

Source: https://docs.avidoai.com/api-reference/reports/get-distinct-values-for-a-column

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/datasources/{id}/columns/{columnId}/values

Returns a list of distinct values from a specific column in a datasource. For relation columns (e.g., topicId), returns the related records with their IDs and display names.

# Get eval stats aggregated by date

Source: https://docs.avidoai.com/api-reference/reports/get-eval-stats-aggregated-by-date

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/eval-stats/by-date

Aggregates eval pass/total/avg-score counts bucketed by the requested time granularity.
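
To make the bucketing concrete, here is a minimal sketch of a per-day aggregation computed client-side. The input tuple shape `(timestamp, passed, score)` and the output keys `pass`, `total`, and `avg_score` are assumptions for illustration, not the endpoint's actual response schema.

```python
from collections import defaultdict
from datetime import datetime


def eval_stats_by_day(results):
    """Group (timestamp, passed, score) tuples by calendar day."""
    buckets = defaultdict(lambda: {"pass": 0, "total": 0, "score_sum": 0.0})
    for timestamp, passed, score in results:
        bucket = buckets[timestamp.date().isoformat()]
        bucket["total"] += 1
        bucket["pass"] += int(passed)
        bucket["score_sum"] += score
    # Convert the running sum into an average per bucket, ordered by day.
    return {
        day: {
            "pass": b["pass"],
            "total": b["total"],
            "avg_score": b["score_sum"] / b["total"],
        }
        for day, b in sorted(buckets.items())
    }


# Hypothetical eval results spanning two days.
results = [
    (datetime(2024, 1, 1, 9, 0), True, 1.0),
    (datetime(2024, 1, 1, 17, 30), False, 0.5),
    (datetime(2024, 1, 2, 8, 15), True, 0.5),
]
stats = eval_stats_by_day(results)
print(stats)
```

Other granularities (hour, week, month) would only change how the bucket key is derived from the timestamp.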

# Get eval stats aggregated by date and eval definition

Source: https://docs.avidoai.com/api-reference/reports/get-eval-stats-aggregated-by-date-and-eval-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/eval-stats/by-date-and-definition

Aggregates eval pass/total/avg-score counts bucketed by time granularity and eval definition.

# Get eval stats aggregated by date and topic

Source: https://docs.avidoai.com/api-reference/reports/get-eval-stats-aggregated-by-date-and-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/eval-stats/by-date-and-topic

Aggregates eval pass/total/avg-score counts bucketed by time granularity and topic.

# Get eval stats aggregated by eval definition

Source: https://docs.avidoai.com/api-reference/reports/get-eval-stats-aggregated-by-eval-definition

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/eval-stats/by-definition

Aggregates eval pass/total counts per eval definition across the time range. EXPERIMENT runs are excluded.

# List available datasources

Source: https://docs.avidoai.com/api-reference/reports/list-available-datasources

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting/datasources

Returns a list of all available reporting datasources with their IDs and human-readable names.

# List reports

Source: https://docs.avidoai.com/api-reference/reports/list-reports

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/reporting

Retrieves a paginated list of reports with optional filtering by date range, assignee, and application.

# Query reporting data

Source: https://docs.avidoai.com/api-reference/reports/query-reporting-data

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/reporting/query

Queries reporting data from specified datasources with optional filters and groupBy clauses. Supports aggregation and date truncation for time-based grouping.

# Update a report

Source: https://docs.avidoai.com/api-reference/reports/update-a-report

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/reporting/{id}

Updates an existing report. Can be used to update title, description, or reassign/unassign the report.

# Get a single run by ID

Source: https://docs.avidoai.com/api-reference/runs/get-a-single-run-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/runs/{id}

Retrieves detailed information about a specific run.

# List runs

Source: https://docs.avidoai.com/api-reference/runs/list-runs

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/runs

Retrieves a paginated list of runs with optional filtering.

# Create a scrape job

Source: https://docs.avidoai.com/api-reference/scrape-jobs/create-a-scrape-job

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/scrape-jobs

# Create multiple scrape jobs

Source: https://docs.avidoai.com/api-reference/scrape-jobs/create-multiple-scrape-jobs

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/scrape-jobs/bulk

Create multiple scrape jobs at once from an array of URLs. Supports up to 2000 URLs per request. Each URL will be processed independently, with successful creations and failures reported separately.
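
Because a single request accepts at most 2000 URLs, larger lists need to be split on the client before submission. The helper below is a sketch of that batching step only; it does not call the API, and the example URLs are placeholders.

```python
from typing import Iterator, Sequence

MAX_URLS_PER_REQUEST = 2000  # limit stated in the endpoint description


def chunk_urls(
    urls: Sequence[str], size: int = MAX_URLS_PER_REQUEST
) -> Iterator[list[str]]:
    """Yield consecutive slices of `urls`, each at most `size` items long."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(urls), size):
        yield list(urls[start:start + size])


# Hypothetical input: 4,500 URLs become batches of 2000, 2000, and 500.
urls = [f"https://example.com/page/{i}" for i in range(4500)]
batches = list(chunk_urls(urls))
print([len(batch) for batch in batches])
```

Each batch would then be sent as its own request, and since URLs are processed independently, failures reported for one batch do not affect the others.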

# Delete a scrape job

Source: https://docs.avidoai.com/api-reference/scrape-jobs/delete-a-scrape-job

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/scrape-jobs/{id}

# Get a scrape job by ID

Source: https://docs.avidoai.com/api-reference/scrape-jobs/get-a-scrape-job-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/scrape-jobs/{id}

# List all scrape jobs

Source: https://docs.avidoai.com/api-reference/scrape-jobs/list-all-scrape-jobs

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/scrape-jobs

# Start scraping all pages of a scrape job

Source: https://docs.avidoai.com/api-reference/scrape-jobs/start-scraping-all-pages-of-a-scrape-job

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/scrape-jobs/{id}/start

# Update a scrape job

Source: https://docs.avidoai.com/api-reference/scrape-jobs/update-a-scrape-job

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/scrape-jobs/{id}

# Get install setup status

Source: https://docs.avidoai.com/api-reference/setup/get-install-setup-status

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/setup/status

Returns whether the install needs onboarding. An install needs onboarding when no organizations exist in the database; the dashboard uses this to show a sign-up-first bootstrap flow on fresh deployments. This endpoint is unauthenticated so the login UI can call it before the user has a session.

# Create a new style guide

Source: https://docs.avidoai.com/api-reference/style-guides/create-a-new-style-guide

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/style-guides

Creates a new style guide.

# Get a single style guide by ID

Source: https://docs.avidoai.com/api-reference/style-guides/get-a-single-style-guide-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/style-guides/{id}

Retrieves detailed information about a specific style guide.

# List style guides

Source: https://docs.avidoai.com/api-reference/style-guides/list-style-guides

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/style-guides

Retrieves a paginated list of style guides with optional filtering.

# Update a style guide

Source: https://docs.avidoai.com/api-reference/style-guides/update-a-style-guide

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/style-guides/{id}

Updates the content of an existing style guide.

# Create a new tag

Source: https://docs.avidoai.com/api-reference/tags/create-a-new-tag

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tags

Creates a new tag with the provided information.

# Delete a tag

Source: https://docs.avidoai.com/api-reference/tags/delete-a-tag

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/tags/{id}

Deletes a tag by ID. This will also remove the tag from all documents.

# Get a single tag by ID

Source: https://docs.avidoai.com/api-reference/tags/get-a-single-tag-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tags/{id}

Retrieves detailed information about a specific tag.

# List tags

Source: https://docs.avidoai.com/api-reference/tags/list-tags

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tags

Retrieves a paginated list of tags with optional search filtering.

# Update an existing tag

Source: https://docs.avidoai.com/api-reference/tags/update-an-existing-tag

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/tags/{id}

Updates an existing tag with the provided information.

# Create or update a task schedule

Source: https://docs.avidoai.com/api-reference/task-schedules/create-or-update-a-task-schedule

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/schedule

# Delete task schedule

Source: https://docs.avidoai.com/api-reference/task-schedules/delete-task-schedule

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/tasks/{id}/schedule

# Get task schedule

Source: https://docs.avidoai.com/api-reference/task-schedules/get-task-schedule

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks/{id}/schedule

# Get tags for a task

Source: https://docs.avidoai.com/api-reference/task-tags/get-tags-for-a-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks/{id}/tags

Retrieves all tags assigned to a specific task.

# Update task tags

Source: https://docs.avidoai.com/api-reference/task-tags/update-task-tags

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/tasks/{id}/tags

Updates the tags assigned to a specific task. This replaces all existing tags.

# Add tags to multiple tasks

Source: https://docs.avidoai.com/api-reference/tasks/add-tags-to-multiple-tasks

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/tags

Add one or more tags to multiple tasks in a single request. All tasks and tags must exist and belong to the same organization.

# Bulk delete tasks

Source: https://docs.avidoai.com/api-reference/tasks/bulk-delete-tasks

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/delete

Deletes multiple tasks by their IDs.

# Bulk update tasks

Source: https://docs.avidoai.com/api-reference/tasks/bulk-update-tasks

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml patch /v0/tasks

Updates multiple tasks at once. When isVerified is set, recalculates parent topic verification.

# Create a new task

Source: https://docs.avidoai.com/api-reference/tasks/create-a-new-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks

Creates a new task.

# Delete a task

Source: https://docs.avidoai.com/api-reference/tasks/delete-a-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/tasks/{id}

Deletes a single task by ID.

# Get a single task by ID

Source: https://docs.avidoai.com/api-reference/tasks/get-a-single-task-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks/{id}

Retrieves detailed information about a specific task.

# Get task coverage statistics

Source: https://docs.avidoai.com/api-reference/tasks/get-task-coverage-statistics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks/task-coverage

Returns the percentage of active tasks that have at least one test run within the specified date range.
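
The coverage figure is a simple ratio: active tasks with at least one test run in the range, divided by all active tasks. The sketch below reproduces that arithmetic locally. The input shapes, the inclusive date bounds, and returning `0.0` when there are no active tasks are assumptions, since the summary does not specify them.

```python
from datetime import date


def task_coverage(active_task_ids, test_runs, start, end):
    """Percentage of active tasks with at least one run dated in [start, end]."""
    active = set(active_task_ids)
    if not active:
        return 0.0  # assumption: no active tasks means zero coverage
    covered = {
        task_id
        for task_id, run_date in test_runs
        if task_id in active and start <= run_date <= end
    }
    return 100.0 * len(covered) / len(active)


# Hypothetical data: four active tasks, two of which ran in January.
active_ids = ["t1", "t2", "t3", "t4"]
runs = [
    ("t1", date(2024, 1, 5)),
    ("t1", date(2024, 1, 9)),   # a second run of the same task counts once
    ("t2", date(2023, 12, 31)),  # outside the range
    ("t3", date(2024, 1, 20)),
    ("t9", date(2024, 1, 10)),  # not an active task
]
coverage = task_coverage(active_ids, runs, date(2024, 1, 1), date(2024, 1, 31))
print(coverage)
```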

# Get task IDs

Source: https://docs.avidoai.com/api-reference/tasks/get-task-ids

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks/ids

Retrieves a list of task IDs with optional filtering.

# Install a task template

Source: https://docs.avidoai.com/api-reference/tasks/install-a-task-template

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/template

Installs a predefined task template, creating tasks, topics, and eval definitions.

# List tasks

Source: https://docs.avidoai.com/api-reference/tasks/list-tasks

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tasks

Retrieves a paginated list of tasks with optional filtering.

# Run a task

Source: https://docs.avidoai.com/api-reference/tasks/run-a-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/trigger

Triggers the execution of a task.

# Update an existing task

Source: https://docs.avidoai.com/api-reference/tasks/update-an-existing-task

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/tasks/{id}

Updates an existing task with the provided information.

# Upload tasks via CSV file

Source: https://docs.avidoai.com/api-reference/tasks/upload-tasks-via-csv-file

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/tasks/upload-csv

Uploads a CSV file containing tasks. The file will be validated and processed asynchronously.

# Get a single test by ID

Source: https://docs.avidoai.com/api-reference/tests/get-a-single-test-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/tests/{id}

Retrieves detailed information about a specific test.

# Bulk update topics

Source: https://docs.avidoai.com/api-reference/topics/bulk-update-topics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml patch /v0/topics

Updates multiple topics at once. When isTasksVerified is set, cascades to all tasks in the topics.

# Create a new topic

Source: https://docs.avidoai.com/api-reference/topics/create-a-new-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/topics

Creates a new topic.

# Delete a topic

Source: https://docs.avidoai.com/api-reference/topics/delete-a-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/topics/{id}

Deletes a topic. By default, tasks associated with the topic will have their topicId set to null. Use cascade=true to explicitly delete all associated tasks.
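
The two deletion modes differ only in the `cascade` query parameter. The helper below sketches how the request path might be built for each mode; the topic ID is a placeholder, and the base URL and authentication are deliberately left out because they are not covered in this summary.

```python
from urllib.parse import quote, urlencode


def topic_delete_path(topic_id: str, cascade: bool = False) -> str:
    """Build the path for DELETE /v0/topics/{id}, optionally with cascade=true."""
    path = f"/v0/topics/{quote(topic_id, safe='')}"
    if cascade:
        # Explicit opt-in: associated tasks are deleted along with the topic.
        path += "?" + urlencode({"cascade": "true"})
    return path


# Default: tasks keep existing, with their topicId set to null.
print(topic_delete_path("topic_123"))
# Cascade: tasks associated with the topic are deleted too.
print(topic_delete_path("topic_123", cascade=True))
```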

# Get a single topic by ID

Source: https://docs.avidoai.com/api-reference/topics/get-a-single-topic-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/topics/{id}

Retrieves detailed information about a specific topic.

# List topics

Source: https://docs.avidoai.com/api-reference/topics/list-topics

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/topics

Retrieves a paginated list of topics with optional filtering.

# Update a topic

Source: https://docs.avidoai.com/api-reference/topics/update-a-topic

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml put /v0/topics/{id}

Updates a topic, including assigning or unassigning a user.

# Get a single trace by ID

Source: https://docs.avidoai.com/api-reference/traces/get-a-single-trace-by-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/traces/{id}

Retrieves detailed information about a specific trace.

# Get a single trace by Test ID

Source: https://docs.avidoai.com/api-reference/traces/get-a-single-trace-by-test-id

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/traces/by-test/{id}

Retrieves detailed information about the trace associated with a specific test.

# List Trace Sessions

Source: https://docs.avidoai.com/api-reference/traces/list-trace-sessions

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/traces/sessions

Retrieves traces grouped by referenceId with aggregated metrics.

# List Traces

Source: https://docs.avidoai.com/api-reference/traces/list-traces

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/traces

Retrieve traces with associated steps, filtered by application ID and optional date parameters.

# Create or update webhook configuration for an application

Source: https://docs.avidoai.com/api-reference/webhook/create-or-update-webhook-configuration-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/applications/{id}/webhook

Creates a webhook for an application if one does not exist, otherwise updates the existing webhook URL and/or headers.

# Delete webhook configuration for an application

Source: https://docs.avidoai.com/api-reference/webhook/delete-webhook-configuration-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml delete /v0/applications/{id}/webhook

Deletes the webhook configuration for a specific application.

# Get webhook configuration for an application

Source: https://docs.avidoai.com/api-reference/webhook/get-webhook-configuration-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml get /v0/applications/{id}/webhook

Retrieves the webhook configuration for a specific application. Returns null if no webhook is configured.

# Test webhook configuration for an application

Source: https://docs.avidoai.com/api-reference/webhook/test-webhook-configuration-for-an-application

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/webhook/test

Sends a test webhook request to the configured webhook URL with a sample payload. Returns success status and any error details.

# Validate an incoming webhook request

Source: https://docs.avidoai.com/api-reference/webhook/validate-an-incoming-webhook-request

https://app.stainless.com/api/spec/documented/avido/openapi.documented.yml post /v0/validate-webhook

Checks the body (including timestamp and signature) against the configured webhook secret. Returns `{ valid: true }` if the signature is valid.

# Changelog

Source: https://docs.avidoai.com/changelog

Track product releases and improvements across Avido versions.

Avido v0.9.1 is a focused quality update, fixing layout issues on the dashboard and improving the interface across traces and data imports.

## Dashboard Layout Fix

The dashboard no longer shows a duplicate header when your application has no data yet. The layout now renders cleanly from the start, so new users get a polished first impression.

## Updated Traces View

The test page now uses the latest traces view, giving you a more consistent experience when reviewing trace data across different parts of the product.

## Scrollable Import Table

The URL table on the web scraper import page is now fully scrollable, making it easy to work with longer lists of URLs without the page cutting off content.

This release also includes security patches, container updates, certificate fixes, and infrastructure improvements.

Avido v0.9.0 brings a faster path from task analysis to experimentation, puts traces front and center in the navigation, and delivers a wave of quality improvements across the dashboard, evaluations, and webhook delivery.

## Create Experiments from Selected Tasks

You can now select tasks directly on the Tasks page and launch a new experiment with those tasks pre-filled. No more switching between pages or re-entering task details. Select, configure, and run.

## Traces in Navigation

Traces now have a dedicated page in the main navigation menu. System prompts are automatically extracted and displayed inside trace details, giving you full visibility into every LLM step without digging through raw spans.

## Dashboard & Analytics

* The date range filter on the dashboard now works correctly for 7-day, 14-day, and custom ranges.

* The At Glance page now supports series breakdown and fullscreen mode, consistent with the Insights page.

* Improved filtering on the Tests page removes the previous 100-task limit and adds tag filtering.

## Experiments Polish

* The max tokens slider is now easier to control precisely.

* Accidentally closing the baseline configuration drawer no longer resets your settings.

* Experiment variants no longer extend off-screen on the Experiments page.

* New documentation for the Experiments feature is now available.

## Traces & Evaluations

* The trace detail drawer is now resizable on the Timeline tab.

* You can select and copy text inside the trace drawer.

* Custom evaluation definitions can now be updated reliably.

## Topics & Tasks

* Topics can now be renamed directly.

* Updating a task's topic now refreshes the task list immediately.

* Improved button alignment when creating new tasks.

* Added Ctrl+A and Shift+click range selection for table items.

## Webhook Reliability

* Webhook delivery now supports per-organization throttling, preventing overload for high-volume integrations.

* Fixed applicationId handling in SDK webhook validation.

This release also includes security patches, container updates, certificate fixes, and infrastructure improvements.

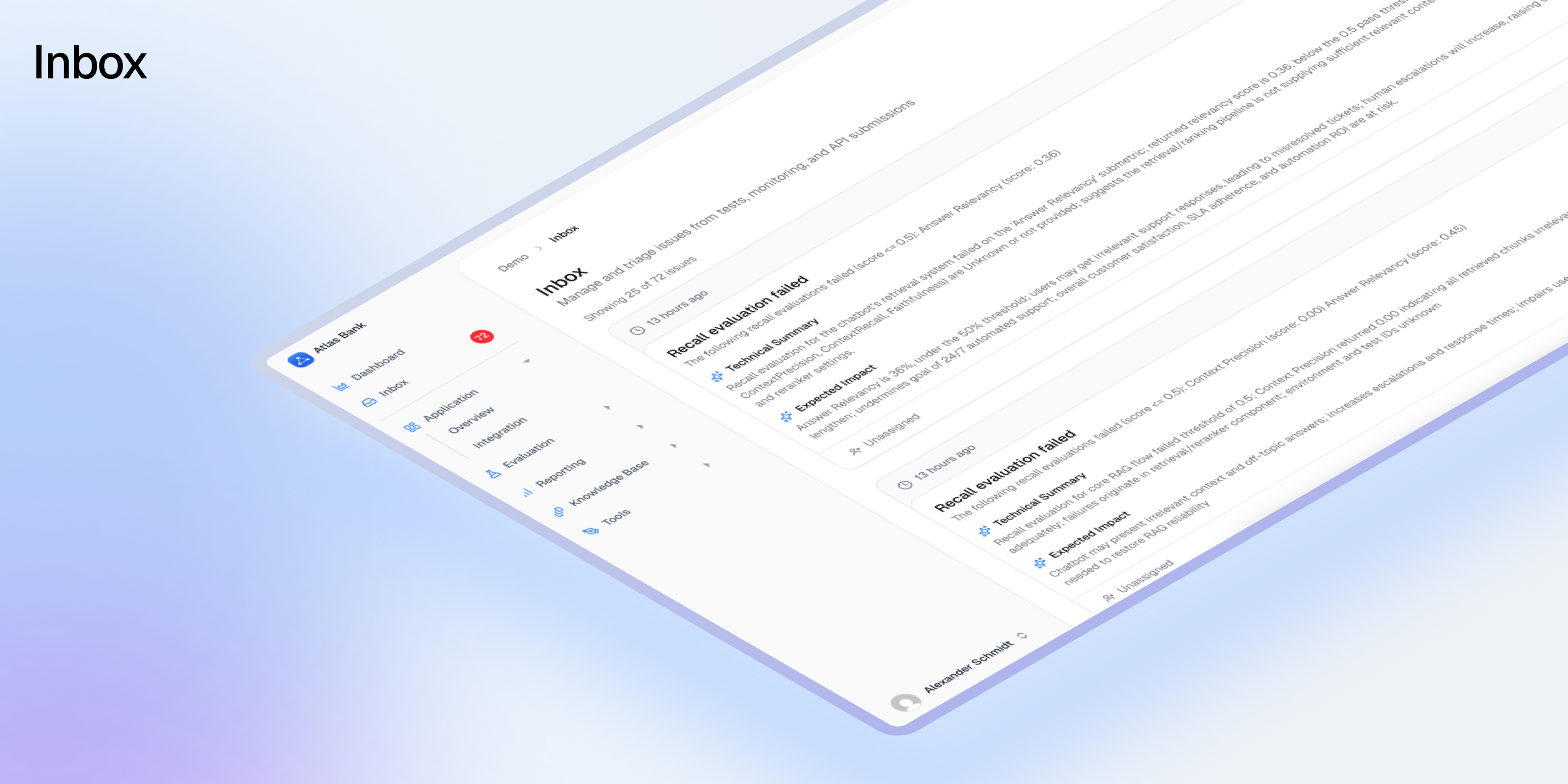

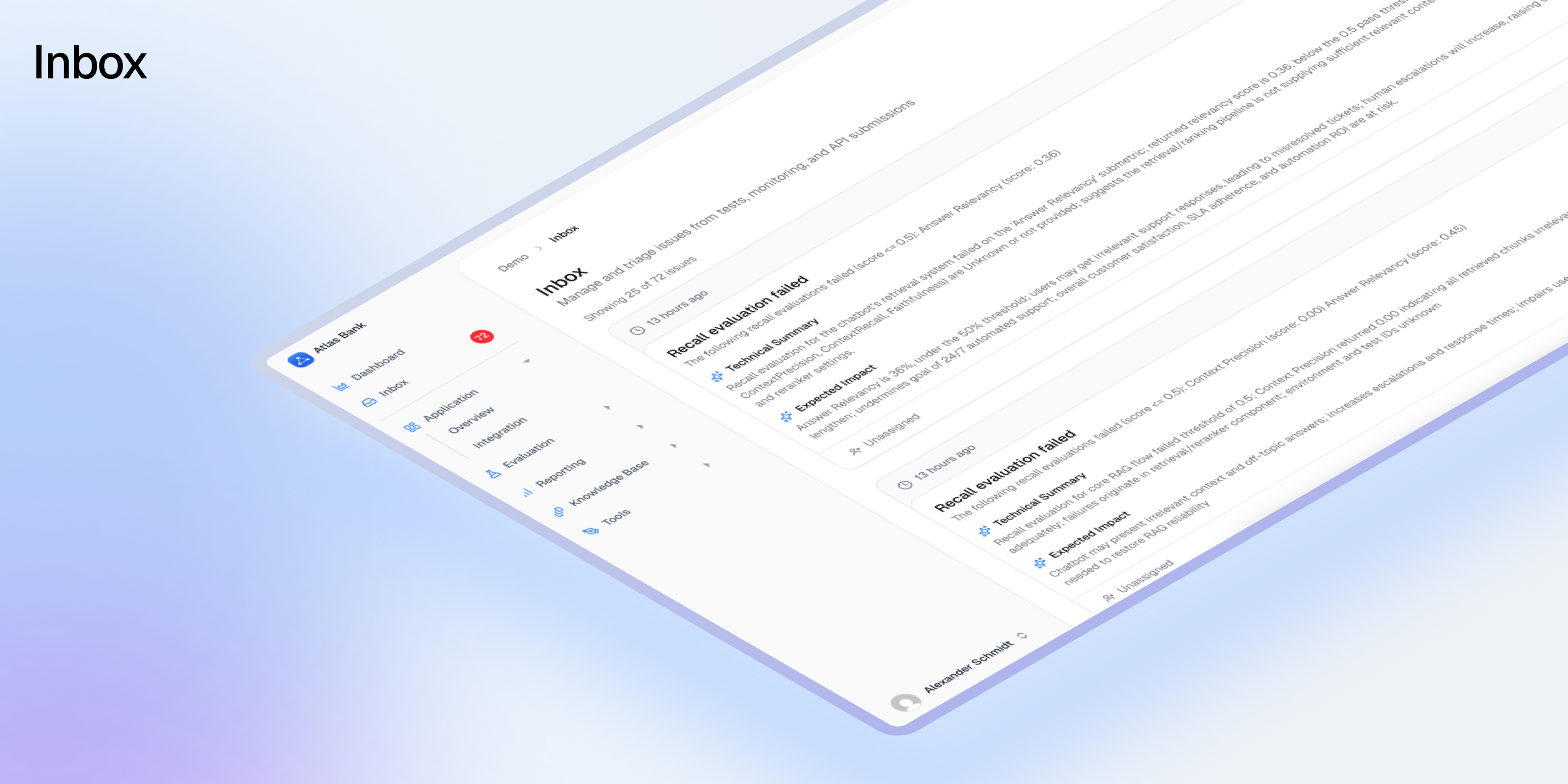

Avido v0.8.0 ships a completely redesigned Inbox, better trace capture for agentic workflows, webhook authentication, and a batch of quality-of-life improvements.

## Inbox v2: Your AI Quality Command Center

The Inbox is where AI quality issues surface. Version 1 was a list. Version 2 is a command center.

We rebuilt the Inbox from scratch because the original didn't scale. When you're running dozens of evaluations across multiple AI applications, a simple scrolling list of issues stops working. You can't find what matters. You can't act on what you find. And you definitely can't triage 200 issues efficiently.

Inbox v2 fixes all of that.

### See Everything at Once

A new split-panel layout gives you a compact issue list on the left and full details on the right. Click any issue and you immediately see the business summary, technical summary, severity, source, and suggested actions. No more clicking into a page, reading it, clicking back, clicking the next one.

Every issue shows its severity, age, type, and cluster size at a glance. You can scan 50 issues in the time it used to take to review 5.

### Find What Matters

Filter by status (open, resolved, dismissed), severity (critical to low), type (bug, human annotation, suggested task), or source (test failure, system alert, API, human annotation). Sort by newest, oldest, or severity. Every filter combination is shareable via URL, so you can bookmark "critical open bugs" or share a filtered view with a colleague.

The default view shows open issues, newest first. For most teams, that's exactly right. Customize when you need to.

### Act Without Leaving

This is the big one. When Avido surfaces a suggested task from a pattern of issues, you can now create that task directly from the Inbox. One click. The task is created in Avido with the suggested title and description, and the issue is marked resolved. No context-switching, no copy-pasting, no "I'll get to that later."

Dismiss issues you've reviewed and don't need to act on. Resolve the ones you've addressed. The issue lifecycle is simple: Open → Resolved or Dismissed.
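
The lifecycle can be modeled as a tiny state machine. The sketch below is illustrative only: the enum and function names are hypothetical, and treating Resolved and Dismissed as final states is an assumption, since the changelog does not say whether issues can be reopened.

```python
from enum import Enum


class IssueStatus(Enum):
    OPEN = "open"
    RESOLVED = "resolved"
    DISMISSED = "dismissed"


# Allowed moves: Open -> Resolved or Dismissed. Terminal states are assumed final.
ALLOWED_TRANSITIONS = {
    IssueStatus.OPEN: {IssueStatus.RESOLVED, IssueStatus.DISMISSED},
    IssueStatus.RESOLVED: set(),
    IssueStatus.DISMISSED: set(),
}


def transition(current: IssueStatus, target: IssueStatus) -> IssueStatus:
    """Return `target` if the move is allowed, otherwise raise ValueError."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


status = transition(IssueStatus.OPEN, IssueStatus.RESOLVED)
print(status.value)
```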

### Work in Bulk

Select multiple issues and resolve, dismiss, or change severity on all of them at once. Shift-click for range select, Cmd/Ctrl-click to toggle individual issues. When you have 30 low-severity duplicates cluttering the view, clear them in two clicks.

A bulk action bar appears at the bottom whenever you have a selection active.

### Keyboard-First Navigation

For teams triaging daily, speed matters. Navigate with `j`/`k` or arrow keys. Open details with `Enter`. Go back with `Esc`. Toggle selection with `x`. Resolve with `r`, dismiss with `d`. Press `?` to see all shortcuts.

You can process an entire Inbox session without touching the mouse.

### Smarter Clustering

Issues that are duplicates or near-duplicates now cluster together automatically. The parent issue shows a count badge so you know there are related issues underneath. When you resolve or dismiss a parent, all issues in the cluster follow.

This means fewer duplicate issues cluttering your view, and when you take action on one, you're taking action on all of them.
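
The cascade described above can be pictured with a small data model. This is a sketch under assumptions: the `Issue` class and its fields are hypothetical, and whether the count badge includes the parent itself is not specified, so the example counts it.

```python
from dataclasses import dataclass, field


@dataclass
class Issue:
    id: str
    status: str = "open"
    children: list["Issue"] = field(default_factory=list)

    @property
    def cluster_size(self) -> int:
        # Assumption: the badge counts the parent plus its duplicates.
        return 1 + len(self.children)


def set_cluster_status(parent: Issue, status: str) -> int:
    """Resolve or dismiss a parent and every issue clustered under it.

    Returns the number of issues that were updated.
    """
    if status not in {"resolved", "dismissed"}:
        raise ValueError(f"unsupported status: {status}")
    parent.status = status
    for child in parent.children:
        child.status = status
    return parent.cluster_size
```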

### Built for Volume

Infinite scroll loads issues continuously as you move through the list. No pagination, no "load more" buttons. Whether you have 50 issues or 5,000, the experience stays fast.

## Enhanced Agentic Trace Capture

Trace ingestion now handles complex agentic workflows more accurately.

* **Multiple Traces per Request:** Sending multiple unrelated trace spans in a single request now correctly maps each to its own trace

* **Smarter Message Extraction:** User and assistant messages are extracted more accurately from agent steps

* **Full OpenInference Support:** All OpenInference span kinds are now captured and displayed

* **Validated for Production:** Trace mapping verified against real-world agentic architectures
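
To illustrate the multiple-traces-per-request behavior, here is a minimal sketch of splitting a mixed batch of spans into one group per trace. It assumes spans are dictionaries carrying a `traceId` field, as in OTLP JSON. It does not reflect Avido's internal ingestion code.

```python
from collections import defaultdict


def group_spans_by_trace(spans: list[dict]) -> dict[str, list[dict]]:
    """Group spans by trace ID so each unrelated trace stays separate."""
    traces = defaultdict(list)
    for span in spans:
        traces[span["traceId"]].append(span)
    return dict(traces)


# Three spans from two unrelated traces, sent in a single request.
batch = [
    {"traceId": "aaa", "spanId": "1", "name": "agent.run"},
    {"traceId": "bbb", "spanId": "2", "name": "agent.run"},
    {"traceId": "aaa", "spanId": "3", "name": "tool.search"},
]
grouped = group_spans_by_trace(batch)
```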

## Webhook Authentication

* **Custom Auth Headers:** Configure authentication headers on your webhook integration. They're sent with every webhook request

* **Enable/Disable Toggle:** Turn webhooks on or off directly from organization settings
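
On the receiving side, a custom auth header lets your endpoint reject requests that did not come from your webhook integration. The sketch below shows one way to check it. The header name `X-Webhook-Token` and the shared-secret scheme are assumptions for this example. Use whatever header and value you configured.

```python
import hmac


def is_authorized(headers: dict[str, str], header_name: str, expected_value: str) -> bool:
    """Check an incoming webhook request for the configured auth header.

    Header names are matched case-insensitively, and the value is compared
    in constant time to avoid leaking information through timing.
    """
    received = next(
        (value for name, value in headers.items() if name.lower() == header_name.lower()),
        "",
    )
    return hmac.compare_digest(received.encode("utf-8"), expected_value.encode("utf-8"))


# Hypothetical header name and secret.
ok = is_authorized({"x-webhook-token": "s3cret"}, "X-Webhook-Token", "s3cret")
```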

## Tests & Experiments

* **Select All Tasks:** Select all tasks at once when adding them to an experiment

* **Filter by Tag:** Filter tests by the associated task's tags on the Tests page

* **Test Type in Sidebar:** The test sidebar now shows whether a test is synthetic or monitoring

## Evaluation Improvements

* **Recall Eval Handles Empty Context:** When no context is retrieved (e.g. a correctly moderated question), recall evaluation now skips gracefully instead of failing
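
The skip behavior can be pictured as an early return before any scoring happens. The sketch below is a toy: the substring-based recall measure and the function name are assumptions for illustration, not Avido's evaluator. Returning `None` stands in for "skipped".

```python
from typing import Optional


def recall_score(expected_facts: list[str], retrieved_context: list[str]) -> Optional[float]:
    """Return the fraction of expected facts found in the context, or None if skipped."""
    if not retrieved_context:
        # Nothing was retrieved (for example, a moderated question):
        # skip instead of raising or reporting a failing score.
        return None
    if not expected_facts:
        return None  # Nothing to measure against.
    context_text = " ".join(retrieved_context).lower()
    found = sum(1 for fact in expected_facts if fact.lower() in context_text)
    return found / len(expected_facts)
```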

## Trace & UI Polish

* **Traces Sorted Newest First:** The trace list now defaults to most recent traces on top

* **Accurate Trace Duration:** Total duration calculations are now correct for multi-step traces

* **Task Creation Fixes:** Newly created tasks appear immediately. New topics are auto-selected. Creating a task without a topic no longer errors

* **Webhook Test Results:** Failed webhook tests now display correctly in the UI

* **Settings Tab Persistence:** The active tab in Settings/Integrations stays in the URL

* **Task Filter at Scale:** The task dropdown in test filters now loads beyond 100 items

Along with security patches, dependency upgrades, and infrastructure improvements.

Avido v0.7.1 polishes the trace experience introduced in v0.7.0, and ships updated SDKs with better developer experience and new APIs.

## Trace Explorer Polish

* **Redesigned Trace List:** The trace list page now uses the same table layout as tasks and tests, with right-aligned colored badges for latency, cost, and status. Consistent and scannable

* **Timeline Duration and Hierarchy:** The timeline view now shows step durations and groups related steps hierarchically. LLM calls, tool executions, and logs appear under their parent group instead of a flat list

* **Cleaner UI:** Trace views are more focused with less visual clutter

## Updated SDKs

* **New TypeScript SDK:** A new native TypeScript SDK with improved developer experience, better type safety, and full coverage of recent API additions

* **Python SDK Update:** The Python SDK ships with the latest API changes and improved ergonomics

Along with security patches, monitoring improvements, and infrastructure hardening under the hood.

Avido v0.7.0 adds full support for agentic AI workflows. You can now capture multi-step agent traces with cost and error tracking, explore them with advanced filtering and search, and debug them with a timeline view, annotations, and shareable links.

## Agentic Trace Capture

* **Agent and Chain Grouping:** Traces from agentic frameworks now preserve their full hierarchy. Agent loops, chain orchestrations, and tool calls display as nested groups instead of flat logs

* **Error and Status Tracking:** Every trace step now carries a status (success, error, timeout) with error messages visible inline. No more guessing which step failed

* **Cost Tracking:** LLM steps now track cost per call. Set up model pricing once, and costs are auto-computed from token counts on every ingest. Total cost rolls up to the trace level

* **Tool Call Correlation:** Parallel tool calls (common in Claude and OpenAI agents) now carry correlation IDs, so you can match each tool execution to the LLM request that triggered it

* **Session Grouping:** Multi-turn conversations are linked by session ID. See all traces from a single conversation thread in one view

* **OpenTelemetry GenAI Support:** The OTel ingestion pipeline now extracts GenAI semantic convention attributes: agent names, finish reasons, tool call IDs, conversation IDs, and error status

* **Faster Ingest:** Trace ingestion is significantly faster, especially for complex agent traces with many steps
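
The cost roll-up described under Cost Tracking amounts to multiplying token counts by per-model prices and summing over steps. Here is a minimal sketch. The pricing table, model name, and step fields are hypothetical, and prices are expressed per one million tokens as an assumption.

```python
# Hypothetical pricing in USD per 1,000,000 tokens.
PRICING = {
    "example-model": {"input_per_1m": 3.00, "output_per_1m": 15.00},
}


def step_cost(model: str, input_tokens: int, output_tokens: int, pricing=PRICING) -> float:
    """Cost of a single LLM step, computed from its token counts."""
    price = pricing[model]
    total = input_tokens * price["input_per_1m"] + output_tokens * price["output_per_1m"]
    return total / 1_000_000


def trace_cost(steps: list[dict], pricing=PRICING) -> float:
    """Total cost of a trace: the sum over its LLM steps; other step types cost nothing."""
    return sum(
        step_cost(s["model"], s["input_tokens"], s["output_tokens"], pricing)
        for s in steps
        if s["type"] == "llm"
    )


steps = [
    {"type": "llm", "model": "example-model", "input_tokens": 1000, "output_tokens": 500},
    {"type": "tool", "name": "search"},
    {"type": "llm", "model": "example-model", "input_tokens": 2000, "output_tokens": 100},
]
total = trace_cost(steps)
```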

## Trace Explorer

* **Advanced Filters:** Filter traces by session, cost range, duration range, error status, metadata key/value pairs, and evaluation score range

* **Full-Text Search:** Search across all trace step content: inputs, outputs, tool calls, errors, and metadata

* **Enriched Table:** The trace list now shows duration, token count, cost, status, session ID, and step count at a glance. Toggle columns to fit your workflow

* **Session View:** A dedicated sessions tab groups traces by conversation, showing trace count, time range, total cost, and error indicators per session

* **Eval Scores in Traces:** Traces linked to evaluations now display scores as color-coded badges in the drawer and as an average score column in the list

## Trace Debugging

* **Timeline Visualization:** A new waterfall view shows the temporal relationship between trace steps. Color-coded by type (LLM, tool, retriever, group), with hover details for duration, tokens, cost, and errors. Useful for spotting bottlenecks and parallel execution

* **Step Annotations:** Leave notes on individual trace steps for debugging or review. Annotation badges show which steps have notes

* **Deep Link Sharing:** Copy a link to a specific trace and step. Opening it auto-scrolls to the right place with a highlight animation

* **Export:** Download any trace as JSON (full data) or Markdown (formatted narrative with summary table) for offline analysis or bug reports

## Improvements

* **New SDKs:** A new TypeScript SDK and a new version of the Python SDK, both fully up to date with the latest API

Along with security patches, dependency updates, infrastructure hardening, and reliability improvements under the hood.

Avido v0.6.1 is a focused round of fixes and improvements across the platform: better experiment workflows, faster page loads, and a more polished experience throughout.

## Trace Accuracy

* **Correct Trace Hierarchy:** Fixed an issue where parent span IDs weren't properly respected, which could cause duplicate steps in your trace view. Traces now display the correct call hierarchy
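
To show why parent span IDs matter, here is a minimal sketch of rebuilding a call hierarchy from flat spans. It assumes spans are dictionaries with `spanId` and an optional `parentSpanId`, as in OTLP JSON. It is an illustration, not the fix that shipped.

```python
from collections import defaultdict


def build_span_tree(spans: list[dict]) -> tuple[list[str], dict[str, list[str]]]:
    """Return (root_span_ids, children_by_parent_id) for a flat list of spans.

    Each span appears exactly once: under its parent when that parent is in
    the batch, or as a root otherwise.
    """
    known_ids = {span["spanId"] for span in spans}
    children = defaultdict(list)
    roots = []
    for span in spans:
        parent = span.get("parentSpanId")
        if parent and parent in known_ids:
            children[parent].append(span["spanId"])
        else:
            roots.append(span["spanId"])
    return roots, dict(children)


roots, children = build_span_tree([
    {"spanId": "agent", "parentSpanId": None},
    {"spanId": "llm", "parentSpanId": "agent"},
    {"spanId": "tool", "parentSpanId": "agent"},
    {"spanId": "http", "parentSpanId": "tool"},
])
```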

## Experiments

* **Accurate Variant Comparison:** Variant comparisons now correctly show differences in percentage points instead of raw percentages

* **Locked Completed Experiments:** You can no longer accidentally create new variants on completed or archived experiments

* **Improved Navigation:** Removed a confusing trace link that sent users to a different page with no easy way back. Added a close button to the test modal for smoother workflows
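
To make the percentage-point distinction concrete: if a baseline passes 80% of tests and a variant passes 90%, the difference is 10 percentage points, which is a 12.5% relative improvement. The sketch below computes both. The function name and rounding are choices made for this example.

```python
def compare_pass_rates(baseline: float, variant: float) -> dict[str, float]:
    """Compare two pass rates given as fractions between 0 and 1."""
    delta = variant - baseline
    return {
        # Absolute difference between the two rates, in percentage points.
        "percentage_points": round(delta * 100, 2),
        # Change relative to the baseline, as a percentage.
        "relative_percent": round(delta / baseline * 100, 2),
    }


result = compare_pass_rates(baseline=0.80, variant=0.90)
```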

## UI & Usability

* **Monitoring Badge:** Tasks with active monitoring now show a badge in the task table for quick visibility

* **Active Filter Indicator:** An icon now signals when you have filters applied, so you always know what's shaping your view

* **Settings Navigation:** Added a back-to-app button in User Settings

* **Create Documents Button:** Fixed a layout issue that hid the create button on the documents page

* **Copy Application ID:** You can now copy your application ID from the integration page as expected

* **Side Sheet Fixes:** Rounded borders render correctly. Task side sheets no longer reset your scroll position when opened

* **Task Failure Evaluation:** Triggered tasks now correctly evaluate failure conditions on run completion

Along with security patches, performance improvements, and reliability work under the hood.

Avido v0.6.0 brings native OpenTelemetry trace ingestion (beta), stronger authentication with 2FA and SSO support, and a broad sweep of reporting and UI improvements across the platform.

## OpenTelemetry Trace Ingestion (Beta)

Avido now natively ingests OpenTelemetry traces, making it dramatically easier to connect your existing AI systems. If you're already using frameworks like Vercel AI SDK, LangChain, or LlamaIndex, you can start sending traces to Avido without writing custom integration code.

### Key Capabilities

* **OTLP HTTP Endpoint:** New `POST /v0/otel/traces` endpoint accepts standard OTLP JSON payloads. Plug in your existing OpenTelemetry setup and start sending traces immediately

* **Automatic Span Classification:** LLM, tool, retriever, and chain spans are automatically mapped to Avido's trace model with full attribute extraction

* **Latency Analysis:** New duration and timing fields on trace steps for precise performance tracking across your AI pipeline

This is a beta release and we'd love your feedback as you integrate. Reach out if you need help connecting your stack.
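
If you are not using an OpenTelemetry exporter, you can build an OTLP JSON payload by hand to see what the endpoint expects. The sketch below follows the standard OTLP JSON shape (`resourceSpans`, `scopeSpans`, `spans`). The base URL, span name, and any authentication headers are assumptions. Substitute the values for your deployment. The request is only constructed here, not sent.

```python
import json
import secrets
import time
import urllib.request


def build_otlp_payload(span_name: str, service_name: str, duration_ms: int) -> dict:
    """Build a minimal OTLP JSON payload containing a single span."""
    end_ns = time.time_ns()
    start_ns = end_ns - duration_ms * 1_000_000
    span = {
        "traceId": secrets.token_hex(16),  # 32 hex characters
        "spanId": secrets.token_hex(8),    # 16 hex characters
        "name": span_name,
        "kind": 1,  # SPAN_KIND_INTERNAL
        # OTLP JSON encodes 64-bit integers as strings.
        "startTimeUnixNano": str(start_ns),
        "endTimeUnixNano": str(end_ns),
    }
    resource = {
        "attributes": [{"key": "service.name", "value": {"stringValue": service_name}}]
    }
    return {"resourceSpans": [{"resource": resource, "scopeSpans": [{"spans": [span]}]}]}


def build_request(base_url: str, payload: dict, extra_headers=None) -> urllib.request.Request:
    """Prepare a POST to /v0/otel/traces; auth headers are passed in by the caller."""
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v0/otel/traces",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", **(extra_headers or {})},
        method="POST",
    )


# Hypothetical base URL; call urllib.request.urlopen(request) to actually send.
payload = build_otlp_payload("llm.call", "demo-app", duration_ms=250)
request = build_request("https://avido.example.com/", payload)
```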

## Authentication & Security

* **Two-Factor Authentication:** TOTP-based 2FA is now available for all users, with backup recovery codes

* **SSO Support:** Single sign-on support for enterprise deployments
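
For background on how TOTP codes are derived, here is a minimal sketch of the standard algorithm from RFC 6238 (HMAC-SHA1 over a 30-second time counter). It illustrates the general technique only and says nothing about how Avido stores secrets or validates codes.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, digits: int = 6, step: int = 30) -> str:
    """Compute a time-based one-time password per RFC 6238 (SHA-1 variant)."""
    counter = unix_time // step
    # HMAC the 8-byte big-endian counter with the shared secret.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low 4 bits of the last byte pick an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10**digits).zfill(digits)


# RFC 6238 test vector: ASCII secret "12345678901234567890" at T = 59 seconds.
rfc_code = totp(b"12345678901234567890", 59, digits=8)  # "94287082"
```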

## Reporting & Insights

* **Flexible Filtering & Grouping:** Eval results can now be filtered and grouped by any combination of eval, task, topic, or tag

* **Improved Report Creation:** Clear feedback when creating reports, so you can easily distinguish between missing configuration and empty results

* **Editable Descriptions:** Add or edit descriptions on existing Insights reports at any time

* **Empty Series Toggle:** Hide or show time points with no data in chart breakdowns for cleaner visualizations

* **Last 12 Months Preset:** New date filter preset for rolling 12-month reporting windows
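
Grouping by any combination of eval, task, topic, or tag comes down to using a tuple of fields as the group key. The sketch below shows the idea on in-memory records. The record fields and the choice of a mean score are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean


def group_scores(results: list[dict], group_by: list[str]) -> dict[tuple, float]:
    """Average the score of eval results grouped by any combination of fields."""
    groups = defaultdict(list)
    for result in results:
        key = tuple(result[name] for name in group_by)
        groups[key].append(result["score"])
    return {key: mean(scores) for key, scores in groups.items()}


# Hypothetical eval results.
results = [
    {"eval": "recall", "topic": "billing", "score": 0.5},
    {"eval": "recall", "topic": "billing", "score": 1.0},
    {"eval": "recall", "topic": "refunds", "score": 0.25},
    {"eval": "style", "topic": "billing", "score": 1.0},
]
by_eval_and_topic = group_scores(results, ["eval", "topic"])
by_eval = group_scores(results, ["eval"])
```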

## UI Polish

* **Scroll & Layout Fixes:** Restored proper scrolling across experiment detail pages, style guide editor, and topic selection

* **Sidebar Navigation:** Accurate active states, properly fitted workspace names, and visible application selector

* **Experiment Lifecycle:** Enhanced archive/restore flow with confirmation dialogs and status tracking

* **Copy to Clipboard:** Consistent copy behavior across the platform

* **Pagination:** Changing filters now correctly resets to page 1

Along with security, performance, and reliability improvements to make Avido faster and more stable for you.

Avido v0.5.0 introduces Emerging Use Case Tracking, the first feature in Avido's Monitoring module. It automatically discovers how users actually use your AI in production, so teams can identify gaps between their test coverage and real-world usage before those gaps become incidents.

## Emerging Use Case Tracking

Emerging Use Case Tracking examines sampled production traffic, clusters repeated patterns, and surfaces suggestions for user behaviors that aren't covered by your existing tasks. Privacy-first, human-in-the-loop, built for regulated industries.

### Key Capabilities

* Automatic Pattern Discovery: Sample production sessions, extract user intent, and cluster similar requests together—one-off queries filtered automatically